Sound synthesis with computers is often described as a Turing test or “imitation game”. In this context, a passing test is regarded by some as evidence of machine intelligence and by others as damage to human musicianship. Yet both sides agree to judge synthesizers on a perceptual scale from fake to real. My article rejects this premise and borrows from philosopher Clément Rosset’s “L’Objet singulier” (1979) and “Fantasmagories” (2006) to affirm (1) the reality of all music, (2) the infidelity of all audio data, and (3) the impossibility of strictly repeating sensations. Compared to analog tape manipulation, deep generative models are neither more nor less unfaithful. In both cases, what is at stake is not to deny reality via illusion but to cultivate imagination as a “function of the unreal” (Bachelard); i.e., a precise aesthetic grip on reality. Meanwhile, I insist that digital music machines are real objects within real human societies: their performance on imitation games should not exonerate us from studying their social and ecological impacts.

Tag: music

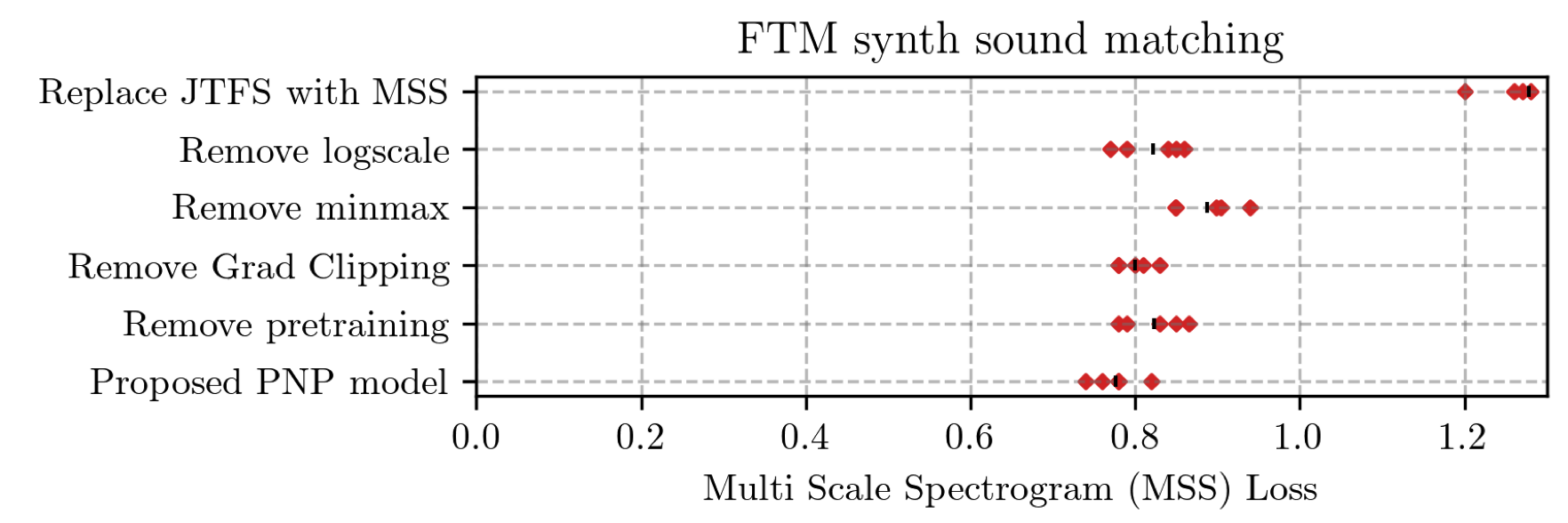

Learning to Solve Inverse Problems for Perceptual Sound Matching @ IEEE TASLP

Perceptual sound matching (PSM) aims to find the input parameters to a synthesizer so as to best imitate an audio target. Deep learning for PSM optimizes a neural network to analyze and reconstruct prerecorded samples. In this context, our article addresses the problem of designing a suitable loss function when the training set is generated by a differentiable synthesizer. Our main contribution is perceptual–neural–physical loss (PNP), which aims at addressing a tradeoff between perceptual relevance and computational efficiency. The key idea behind PNP is to linearize the effect of synthesis parameters upon auditory features in the vicinity of each training sample. The linearization procedure is massively parallelizable, can be precomputed, and offers a 100-fold speedup during gradient descent compared to differentiable digital signal processing (DDSP). We show that PNP is able to accelerate DDSP with joint time–frequency scattering transform (JTFS) as auditory feature while preserving its perceptual fidelity. Additionally, we evaluate the impact of other design choices in PSM: parameter rescaling, pretraining, auditory representation, and gradient clipping. We report state-of-the-art results on both datasets and find that PNP-accelerated JTFS has greater influence on PSM performance than any other design choice.
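The linearization idea described above can be illustrated with a small sketch. This is a toy example under stated assumptions, not the authors' implementation: the two-parameter synthesizer `synth`, the frame-energy feature `features` (a stand-in for an auditory representation such as JTFS), and every function name are hypothetical. The Jacobian is estimated here by finite differences for simplicity, and the quadratic form built from it is one plausible reading of "linearizing the effect of synthesis parameters upon auditory features".

```python
import math

N = 64      # signal length in samples (toy value)
FRAME = 16  # frame length used by the toy feature

def synth(theta):
    """Hypothetical synthesizer g: an exponentially decaying sinusoid.
    theta = [f, d], with f in cycles per signal and d a decay rate."""
    f, d = theta
    return [math.exp(-d * n / N) * math.sin(2 * math.pi * f * n / N)
            for n in range(N)]

def features(x):
    """Hypothetical auditory feature Phi: energy of each frame."""
    return [sum(v * v for v in x[i:i + FRAME]) for i in range(0, N, FRAME)]

def jacobian(theta, h=1e-5):
    """Central finite-difference Jacobian of (Phi o g) at theta.
    Returns one row per feature dimension, one column per parameter."""
    cols = []
    for j in range(len(theta)):
        up, dn = list(theta), list(theta)
        up[j] += h
        dn[j] -= h
        f_up, f_dn = features(synth(up)), features(synth(dn))
        cols.append([(a - b) / (2 * h) for a, b in zip(f_up, f_dn)])
    return [list(row) for row in zip(*cols)]

def precompute_metric(theta):
    """M = J^T J, computed once per training sample before training."""
    J = jacobian(theta)
    p = len(theta)
    return [[sum(row[a] * row[b] for row in J) for b in range(p)]
            for a in range(p)]

def linearized_loss(theta_hat, theta, M):
    """Quadratic form delta^T M delta: needs no synthesis at training time."""
    delta = [a - b for a, b in zip(theta_hat, theta)]
    p = len(delta)
    return sum(delta[a] * M[a][b] * delta[b]
               for a in range(p) for b in range(p))

def feature_loss(theta_hat, theta):
    """Reference loss: squared feature distance, requires resynthesis."""
    fa, fb = features(synth(theta_hat)), features(synth(theta))
    return sum((a - b) ** 2 for a, b in zip(fa, fb))
```

In this sketch, `precompute_metric` would be called once per training sample (and independently across samples, hence in parallel), whereas `linearized_loss` only involves a small matrix product per gradient step. For a predicted `theta_hat` close to `theta`, the two losses should nearly agree; the approximation degrades as the prediction moves away from the target.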

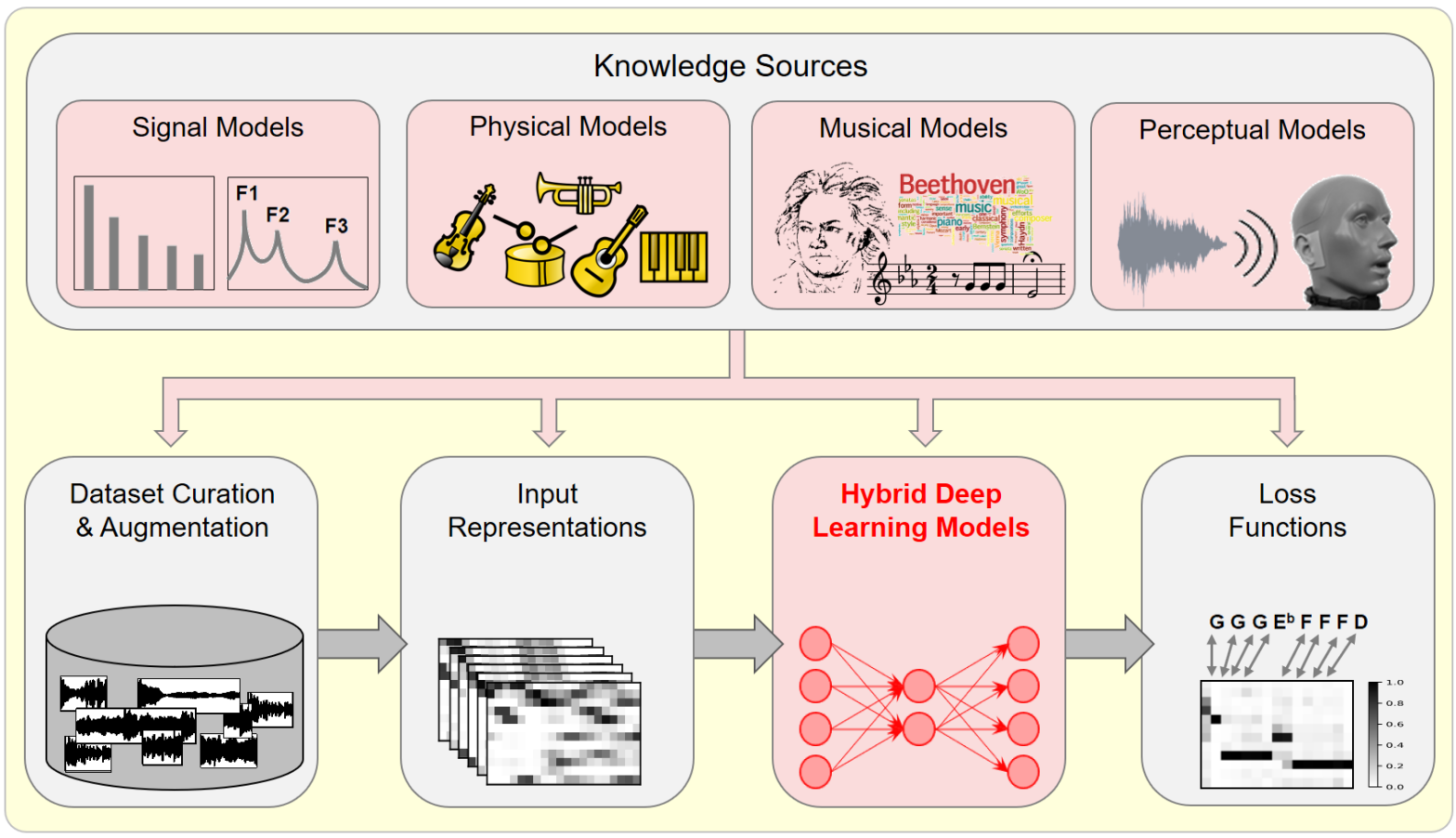

Model-Based Deep Learning for Music Information Research @ IEEE SPM

In this article, we investigate the notion of model-based deep learning in the realm of music information research (MIR). Loosely speaking, we use the term model-based deep learning for approaches that combine traditional knowledge-based methods with data-driven techniques, especially those based on deep learning, within a differentiable computing framework. In music, prior knowledge related, for instance, to sound production, music perception, or music composition theory can be incorporated into the design of neural networks and associated loss functions. We outline three specific scenarios to illustrate the application of model-based deep learning in MIR, demonstrating the implementation of such concepts and their potential.

“Musiscale” action at the GDR MaDICS symposium

On May 30, 2024, the sixth symposium of GDR MaDICS (masses de données, informations et connaissances en sciences) was held in Blois. As part of the action “Musiscale: multiscale modeling of massive musical data”, I presented the team’s work on the scattering transform as well as on multiresolution neural networks (MuReNN).

Towards multisensory control of physical modeling synthesis @ Inter-Noise

Physical models of musical instruments offer an interesting tradeoff between computational efficiency and perceptual fidelity. Yet, they depend on a multidimensional space of user-defined parameters whose exploration by trial and error is impractical. Our article addresses this issue by combining two ideas: query by example and gestural control. On one hand, we train a deep…

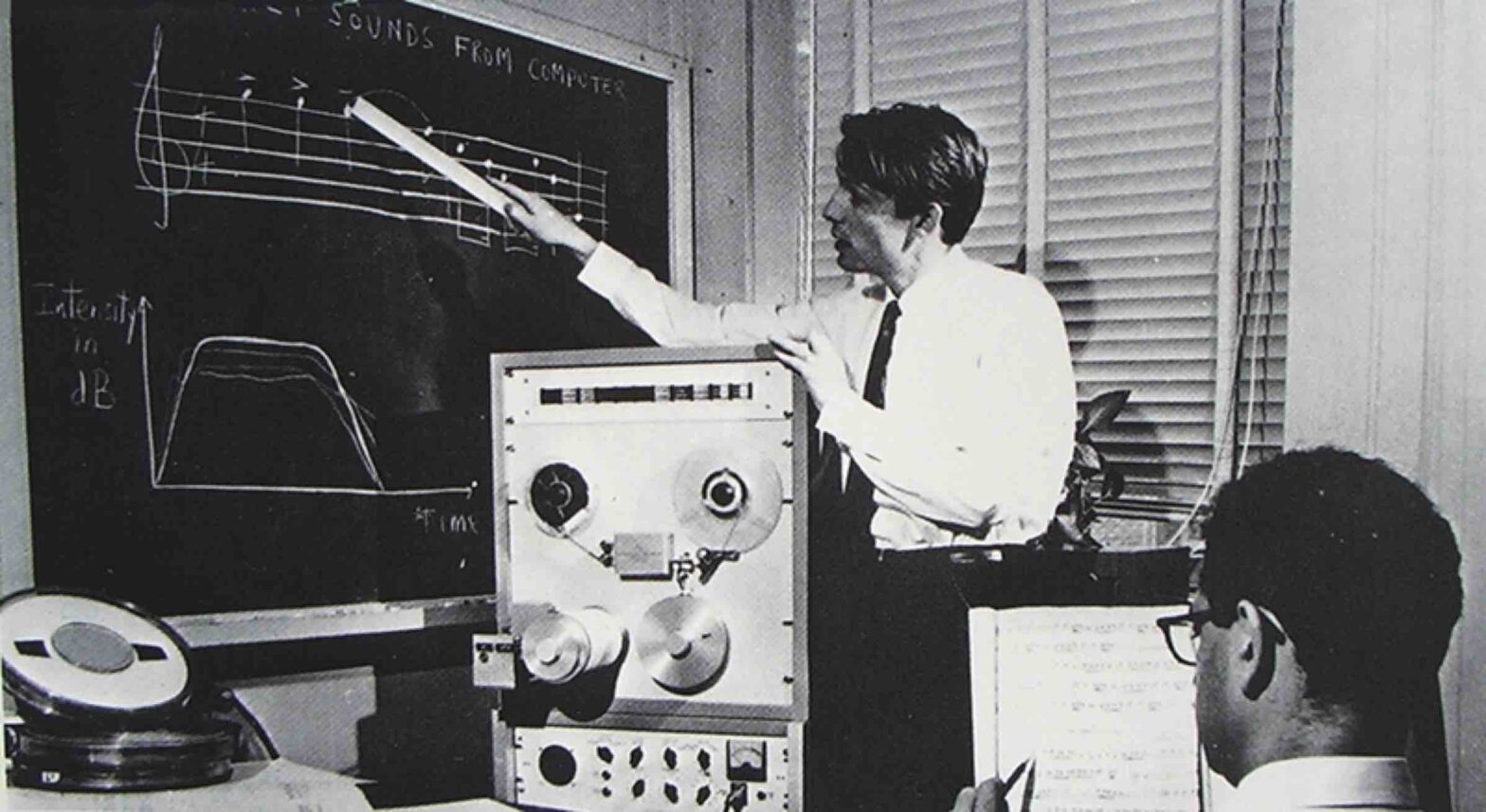

Structure Versus Randomness in Computer Music and the Scientific Legacy of Jean-Claude Risset @ JIM

According to Jean-Claude Risset (1938–2016), “art and science bring about complementary kinds of knowledge”. In 1969, he presented his piece Mutations as “[attempting] to explore […] some of the possibilities offered by the computer to compose at the very level of sound—to compose sound itself, so to speak.” In this article, I propose to take the same motto as a starting point, yet while adopting a mathematical and technological outlook, more so than a musicological one.

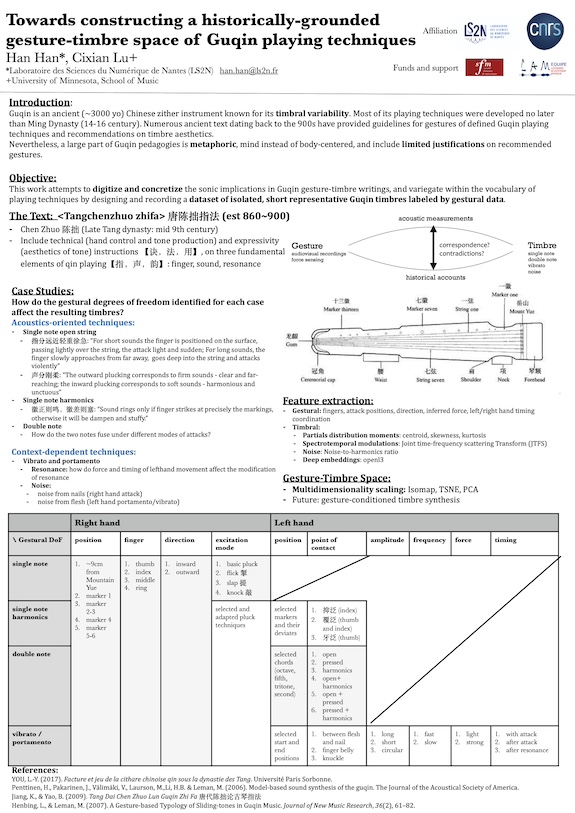

Towards constructing a historically grounded gesture-timbre space of Guqin playing techniques @ Timbre

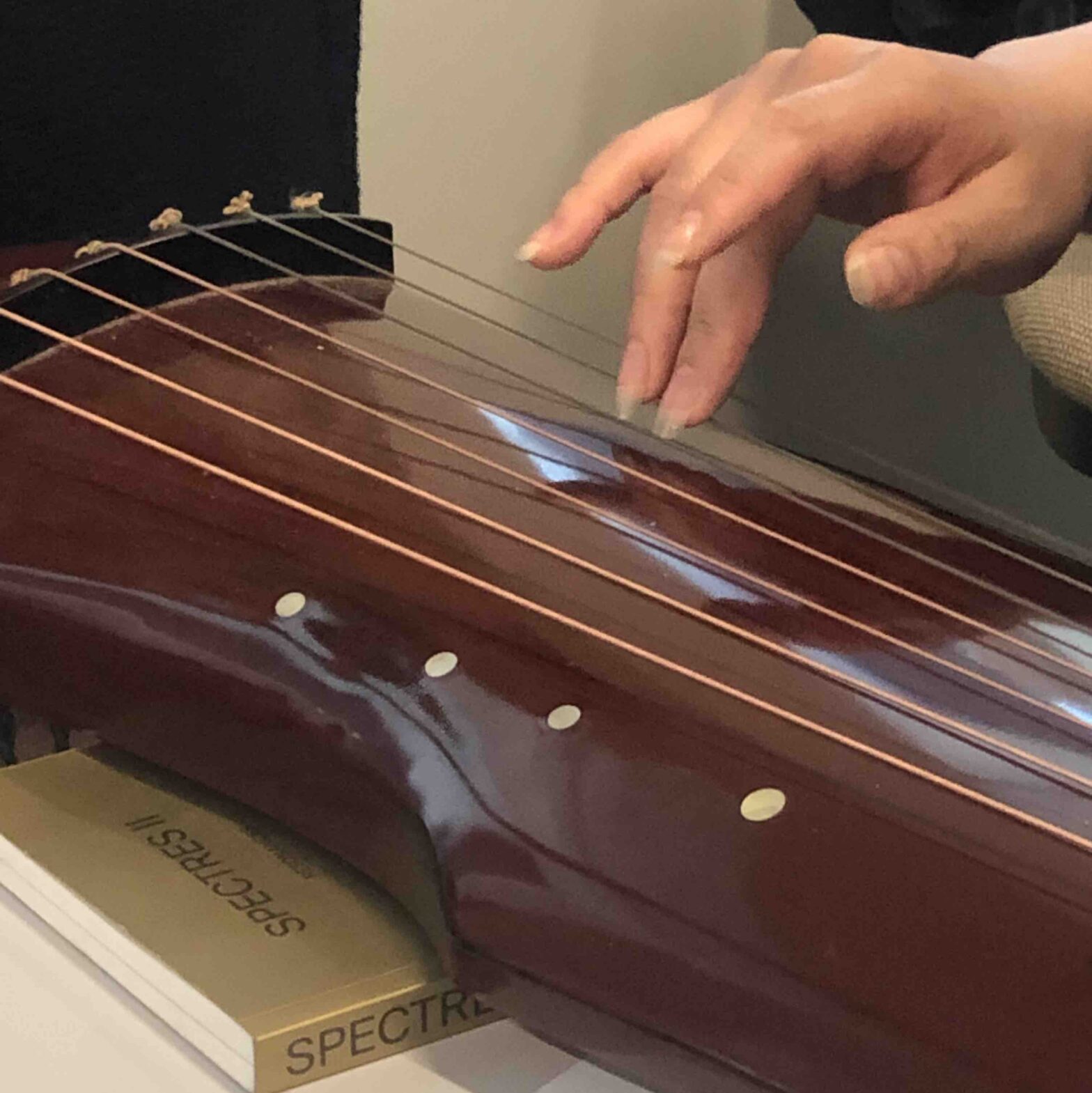

The guqin is an ancient Chinese zither known for its timbral variability and for the vital role that timbre, as opposed to melody or rhythm, plays in its classical compositions. Numerous ancient texts dating back to the 1500s provide gestural guidelines for defined guqin playing techniques and recommendations on timbre aesthetics. These texts also suggest that small deviations in gesture have a significant impact on the resulting timbre. Nevertheless, traditionally and even today, guqin pedagogies are largely metaphorical, addressing the mind rather than the body, and include limited elaboration on recommended gestures. To digitize and make concrete the sonic implications of guqin gesture-timbre writings, and to diversify the oversimplified vocabulary of playing techniques, this study aims to design and record a dataset of isolated, short, representative guqin sounds labeled with gestural data. The sounds in question are curated from passages of ancient texts that emphasize gesture-induced timbral differences. We decompose the notion of gesture into nine degrees of freedom for both hands, including left/right hand position, fingers used, point of contact, left/right hand temporal coordination, etc. We define a ladder of gestural data at various levels, ranging from discrete labels of playing techniques, through the aforementioned degrees of freedom, to continuous signals acquired by a high-speed camera with an automatic hand-tracking system. We analyze, in the time-frequency domain, the timbres resulting from conventional playing gestures and from their systematically “perturbed” versions. We investigate the correlation between timbres and their underlying gestures via methods derived from multidimensional scaling.

The guqin: towards a gestural and timbral cartography

On December 1, 2023, at 2:45 pm, Han Han will present a project on the gestural and timbral cartography of the guqin (Chinese zither) at the library La Grange Fleuret, 11 bis rue de Vézelay, Paris.

This project is part of the “collaborations between young women researchers and artists” and is supported by the French association for computer music (Association française d’informatique musicale, AFIM) and the French society for ethnomusicology (Société française d’ethnomusicologie, SFE).

Kymatio notebooks @ ISMIR 2023

On November 5th, 2023, we hosted a tutorial on Kymatio, entitled “Deep Learning meets Wavelet Theory for Music Signal Processing”, as part of the International Society for Music Information Retrieval (ISMIR) conference in Milan, Italy.

The Jupyter notebooks below were authored by Chris Mitcheltree and Cyrus Vahidi from Queen Mary University of London.

November 16, 2023: GdR ISIS day on “Signal processing for music”

As part of the action “Signal processing for audio and machine listening” of GdR ISIS, we are organizing, on Thursday, November 16, 2023, at IRCAM, a third day dedicated to music signal processing.