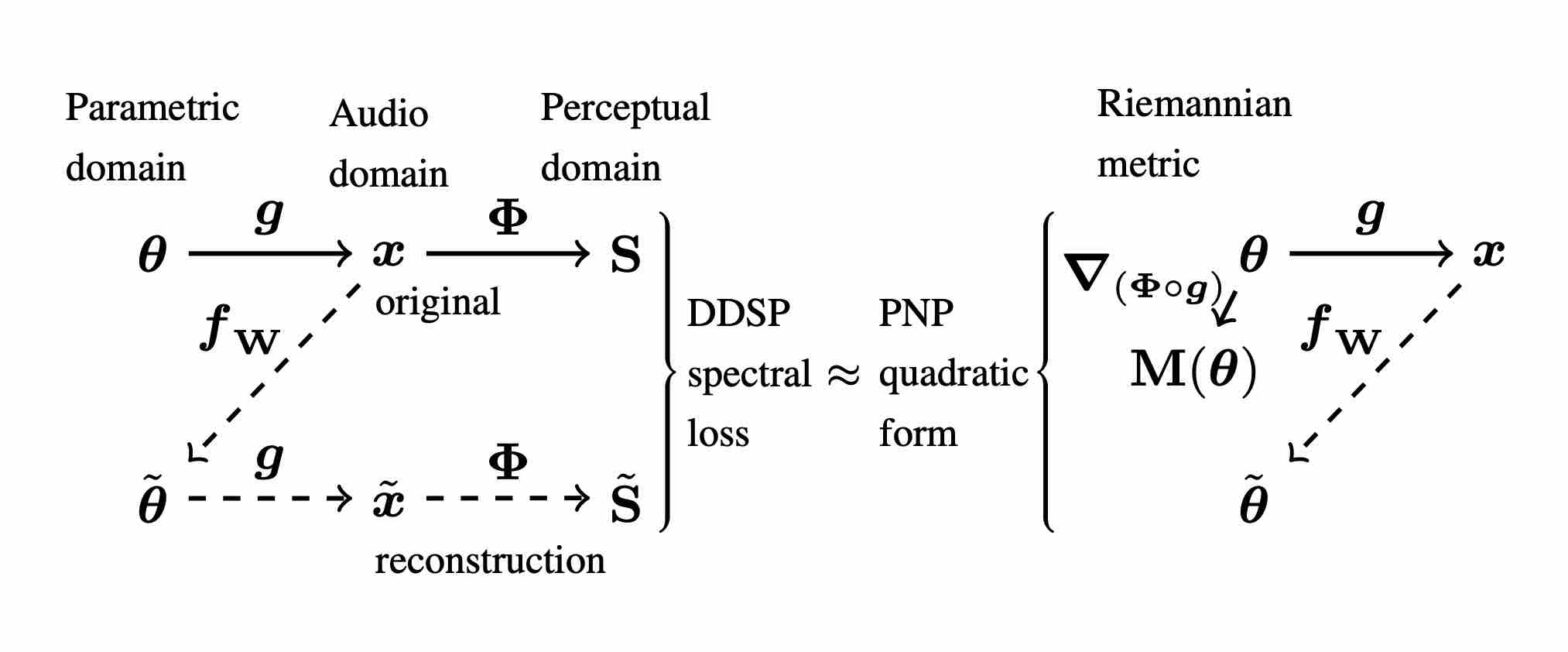

Perceptual–Neural–Physical Sound Matching

Conference paper

Authors: Han Han, Vincent Lostanlen, Mathieu Lagrange.

Conference: Proceedings of the International Conference on Acoustics, Speech, and Signal Processing

Publication date: 2023

Keywords: Sound matching, Auditory similarity, Scattering transform, Deep convolutional networks, Physical modeling synthesis