A hybrid filterbank is a convolutional neural network (convnet) whose learnable filters operate over the subbands of a non-learnable filterbank, which is designed from domain knowledge. While hybrid filterbanks have found successful applications in speech enhancement, our paper shows that they remain susceptible to large deviations of the energy response due to the randomness of convnet weights at initialization. To address this issue, we propose a variant of hybrid filterbanks inspired by residual neural networks (ResNets). The key idea is to introduce a shortcut connection at the output of each non-learnable filter, bypassing the convnet. We prove that the shortcut connection in a residual hybrid filterbank lowers the relative standard deviation of the energy response, while the pairwise cosine distances between non-learnable filters contribute to preventing duplicate features.
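The shortcut idea can be illustrated with a minimal sketch. Everything below is an assumption made for illustration, not the paper's implementation: the function names, the causal FIR filtering, the single learnable filter per subband, and the Gaussian initialization are all hypothetical. It only shows where the shortcut sits, namely at the output of each non-learnable filter, and how one might estimate the relative standard deviation of the output energy over random initializations.

```python
import random
import statistics


def causal_fir(x, h):
    """Filter signal x with FIR taps h (causal, zero-padded, same length as x)."""
    return [
        sum(h[k] * x[n - k] for k in range(len(h)) if n - k >= 0)
        for n in range(len(x))
    ]


def hybrid_filterbank(x, fixed_filters, learned_filters, residual=True):
    """Apply each fixed filter, then its learnable filter, subband by subband."""
    outputs = []
    for psi, theta in zip(fixed_filters, learned_filters):
        subband = causal_fir(x, psi)          # non-learnable stage
        learned = causal_fir(subband, theta)  # learnable stage (hypothetical: one FIR)
        if residual:
            # Shortcut: add the subband back, bypassing the learnable stage.
            learned = [s + l for s, l in zip(subband, learned)]
        outputs.append(learned)
    return outputs


def energy(channels):
    """Total energy across all output channels."""
    return sum(v * v for channel in channels for v in channel)


def relative_std_of_energy(x, fixed_filters, taps, residual, trials=200, seed=0):
    """Estimate std/mean of the output energy over random initializations."""
    rng = random.Random(seed)
    energies = []
    for _ in range(trials):
        # Assumed initialization: i.i.d. Gaussian taps (illustrative choice only).
        learned = [
            [rng.gauss(0.0, 1.0 / taps) for _ in range(taps)]
            for _ in fixed_filters
        ]
        energies.append(energy(hybrid_filterbank(x, fixed_filters, learned, residual)))
    return statistics.pstdev(energies) / statistics.mean(energies)
```

Calling `relative_std_of_energy` once with `residual=True` and once with `residual=False` on the same input gives a rough empirical view of the quantity the abstract refers to. The sketch makes no claim about the values obtained.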

Category: Publications

Robust Deconvolution with Parseval Filterbanks @ IEEE SampTA

This article introduces two contributions: Multiband Robust Deconvolution (Multi-RDCP), a regularization approach for deconvolution in the presence of noise; and Subband-Normalized Adaptive Kernel Evaluation (SNAKE), a first-order iterative algorithm designed to efficiently solve the resulting optimization problem. Multi-RDCP resembles Group LASSO in that it promotes sparsity across the subband spectrum of the solution. We prove that SNAKE enjoys fast convergence rates, and numerical simulations illustrate its efficiency for deconvolving noisy oscillatory signals.
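To make the Group LASSO analogy concrete, here is a minimal sketch of the standard proximal operator of the Group LASSO penalty, often called block soft-thresholding. It is neither Multi-RDCP nor SNAKE. Treating each subband as one group, and the name `group_soft_threshold`, are assumptions made for illustration.

```python
import math


def group_soft_threshold(groups, lam):
    """Proximal operator of lam * sum_g ||v_g||_2 (the Group LASSO penalty).

    Each group is shrunk toward zero as a whole: groups whose Euclidean norm
    is at most lam are set exactly to zero, which yields group-level sparsity.
    """
    result = []
    for v in groups:
        norm = math.sqrt(sum(c * c for c in v))
        scale = max(0.0, 1.0 - lam / norm) if norm > 0.0 else 0.0
        result.append([scale * c for c in v])
    return result


# Hypothetical example: two "subbands", one strong and one weak.
coefficients = [[3.0, 4.0], [0.3, 0.4]]
shrunk = group_soft_threshold(coefficients, lam=1.0)
```

In this example, the first group has norm 5 and is scaled down, while the second has norm 0.5, below the threshold, and is zeroed out entirely. This is the sense in which such a penalty promotes sparsity across groups rather than across individual coefficients.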

Le streaming comme infrastructure et comme mode de vie @ RNRM

The investigation into the ecological impact of music streaming reveals two angles of analysis: one grounded in material infrastructure, the other in the evolution of ways of life. At a time when choice architectures are increasingly locked in around a small number of tech giants, what is at stake in this investigation is the complementarity between quantitative and qualitative methods, as well as interdisciplinarity between computer science, the humanities and social sciences, and Earth system science. In this context, criticizing the unsustainability of streaming does not mean relying on a technological innovation that could suddenly "green" the industry as a whole. Rather, it means denouncing and contesting the utopia of music that is entirely available, to everyone, everywhere, right away. To be credible, alternative scenarios to the status quo must define, in a single technocritical gesture, which way of life they promote and which infrastructure they will maintain.

Robust Multicomponent Tracking of Ultrasonic Vocalizations @ IEEE ICASSP

Ultrasonic vocalizations (USV) convey information about individual identity and arousal status in mice. We propose to track USV as ridges in the time-frequency domain via a variant of time-frequency reassignment (TFR). The key idea is to perform TFR with empirical Wiener shrinkage and multitapering to improve robustness to noise. Furthermore, we perform TFR over both the short-term Fourier transform and the constant-Q transform so as to detect both the fundamental frequency and its harmonic partial (if any). Experimental results show that our approach effectively estimates multicomponent ridges with high precision and low frequency deviation.

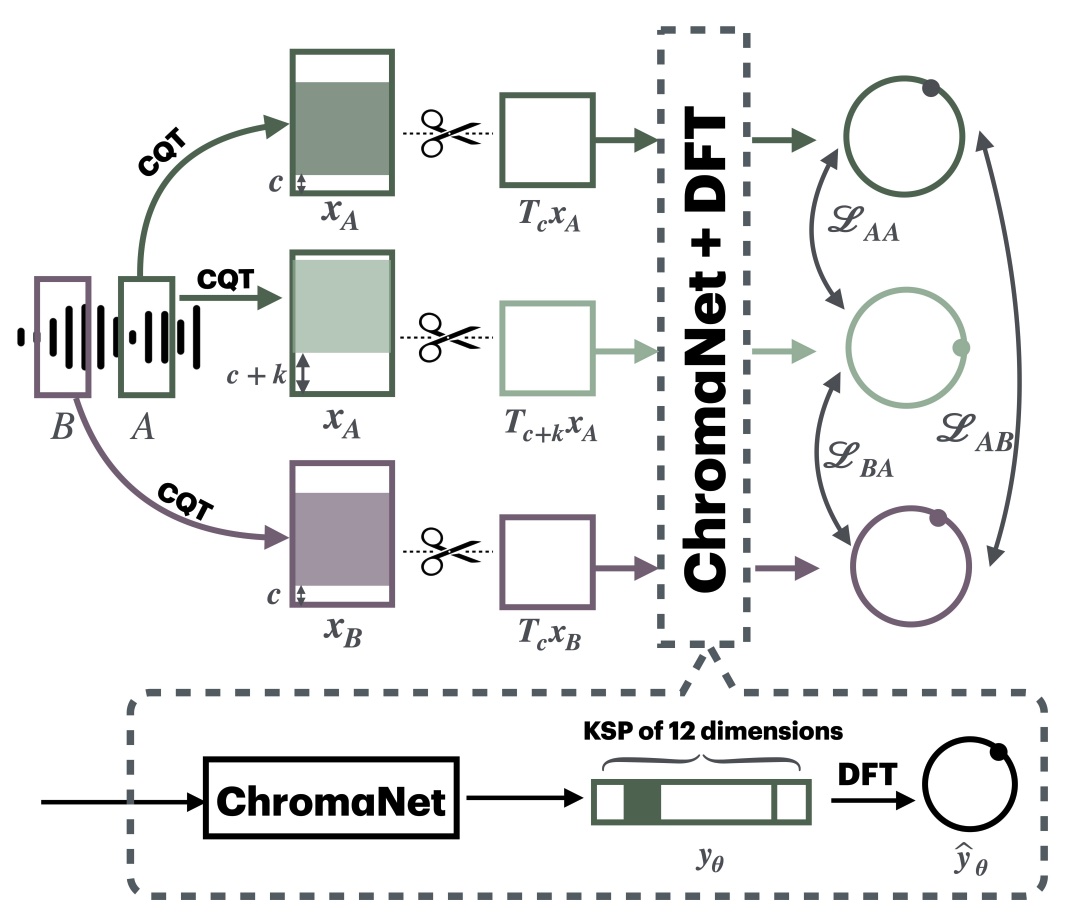

S-KEY: Self-Supervised Learning of Major and Minor Keys from Audio @ IEEE ICASSP

STONE, the current method in self-supervised learning for tonality estimation in music signals, cannot distinguish relative keys, such as C major versus A minor. In this article, we extend the neural network architecture and learning objective of STONE to perform self-supervised learning of major and minor keys (S-KEY). Our main contribution is an auxiliary pretext task to STONE, formulated using transposition-invariant chroma features as a source of pseudo-labels. S-KEY matches the supervised state of the art in tonality estimation on FMAKv2 and GTZAN datasets while requiring no human annotation and having the same parameter budget as STONE. We build upon this result and expand the training set of S-KEY to a million songs, thus showing the potential of large-scale self-supervised learning in music information retrieval.

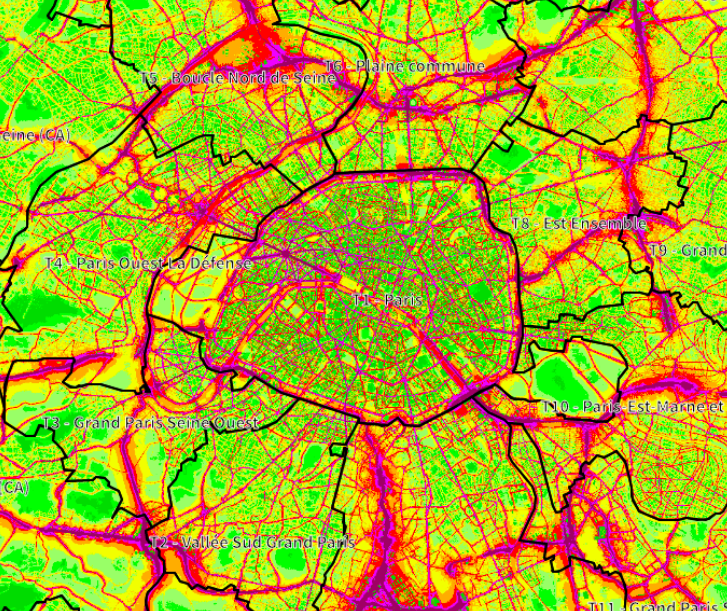

Towards better visualizations of urban sound environments: insights from interviews @ Inter-Noise

Urban noise maps and noise visualizations traditionally provide macroscopic representations of noise levels across cities. However, those representations fail to accurately gauge how these sound environments are perceived, as perception highly depends on the sound sources involved. This paper aims at analyzing the need for representations of sound sources, by identifying the urban stakeholders for whom such representations are assumed to be of importance. Through spoken interviews with various urban stakeholders, we have gained insight into current practices, the strengths and weaknesses of existing tools, and the relevance of incorporating sound sources into existing urban sound environment representations. Three distinct uses of sound source representations emerged in this study: 1) noise-related complaints for industry actors and specialized citizens, 2) soundscape quality assessment for citizens, and 3) guidance for urban planners. Findings also reveal diverse perspectives on the use of visualizations, which should use indicators adapted to the target audience and enable data accessibility.

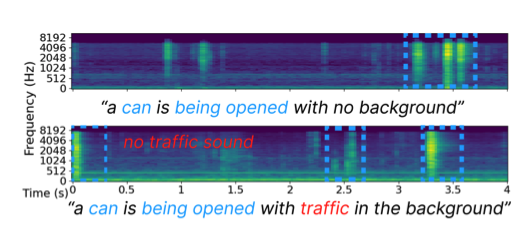

Challenge on Sound Scene Synthesis: Evaluating Text-to-Audio Generation @ NeurIPS Audio Imagination workshop

Despite significant advancements in neural text-to-audio generation, challenges persist in controllability and evaluation. This paper addresses these issues through the Sound Scene Synthesis challenge held as part of the Detection and Classification of Acoustic Scenes and Events 2024. We present an evaluation protocol combining an objective metric, namely Fréchet Audio Distance, with perceptual assessments, utilizing a structured prompt format to enable diverse captions and effective evaluation. Our analysis reveals varying performance across sound categories and model architectures, with larger models generally excelling but innovative lightweight approaches also showing promise. The strong correlation between objective metrics and human ratings validates our evaluation approach. We discuss outcomes in terms of audio quality, controllability, and architectural considerations for text-to-audio synthesizers, providing direction for future research.
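For reference, Fréchet Audio Distance is commonly defined as the Fréchet distance between two multivariate Gaussians fitted to audio embeddings, one for a reference set and one for the generated set. The notation below is introduced here for illustration: $\mu_r, \Sigma_r$ and $\mu_g, \Sigma_g$ denote the mean and covariance of the reference and generated embeddings. The abstract does not state which embedding model the challenge used, so none is assumed.

```latex
\mathrm{FAD} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{tr}\!\left( \Sigma_r + \Sigma_g - 2 \left( \Sigma_r \Sigma_g \right)^{1/2} \right)
```

Lower values indicate that the generated embeddings are statistically closer to the reference embeddings. The distance is zero when both Gaussians coincide.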

Model-based deep learning for music information research @ IEEE Signal Processing Magazine

We use the term model-based deep learning to refer to approaches that combine traditional knowledge-based methods with data-driven techniques, especially those based on deep learning, within a differentiable computing framework. In music, prior knowledge related, for instance, to sound production, music perception, or music composition theory can be incorporated into the design of neural networks and associated loss functions. We outline three specific scenarios to illustrate the application of model-based deep learning in MIR, demonstrating the implementation of such concepts and their potential.

STONE: Self-supervised tonality estimator @ ISMIR

Although deep neural networks can estimate the key of a musical piece, their supervision incurs a massive annotation effort. Against this shortcoming, we present STONE, the first self-supervised tonality estimator. The architecture behind STONE, named ChromaNet, is a convnet with octave equivalence which outputs a “key signature profile” (KSP) of 12 structured logits. First, we train ChromaNet to regress artificial pitch transpositions between any two unlabeled musical excerpts from the same audio track, as measured by cross-power spectral density (CPSD) within the circle of fifths (CoF). We observe that this self-supervised pretext task leads KSP to correlate with tonal key signature. Based on this observation, we extend STONE to output a structured KSP of 24 logits, and introduce supervision so as to disambiguate major versus minor keys sharing the same key signature. Applying different amounts of supervision yields semi-supervised and fully supervised tonality estimators, namely Semi-TONEs and Sup-TONEs. We evaluate these estimators on FMAK, a new dataset of 5489 real-world musical recordings with expert annotation of 24 major and minor keys. We find that Semi-TONE matches the classification accuracy of Sup-TONE with reduced supervision and outperforms it with equal supervision.
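The abstract does not detail how the CPSD is computed, so the following is only one plausible reading, sketched under stated assumptions. Two 12-bin profiles are reordered along the circle of fifths, and the phase of the product between their first Fourier coefficients, a single cross-power term, is read off as a transposition. All function names are hypothetical, and the binary scale templates stand in for the logits a network would produce.

```python
import cmath
import math


def to_circle_of_fifths(profile):
    """Reorder a 12-bin chromatic profile (C, C#, ..., B) along the circle of fifths."""
    reordered = [0.0] * 12
    for pitch_class, value in enumerate(profile):
        # Pitch class p sits at position 7p mod 12 on the circle of fifths.
        reordered[(7 * pitch_class) % 12] = value
    return reordered


def first_dft_coefficient(values):
    """First (non-DC) coefficient of the 12-point discrete Fourier transform."""
    return sum(v * cmath.exp(-2j * math.pi * q / 12) for q, v in enumerate(values))


def estimate_transposition(profile_a, profile_b):
    """Estimate the semitone shift from a to b via the phase of a cross-power term."""
    coef_a = first_dft_coefficient(to_circle_of_fifths(profile_a))
    coef_b = first_dft_coefficient(to_circle_of_fifths(profile_b))
    cross_power = coef_a.conjugate() * coef_b
    # A shift by s steps on the circle of fifths rotates the phase by -2*pi*s/12.
    fifths = round(-cmath.phase(cross_power) * 12 / (2 * math.pi)) % 12
    # Convert steps on the circle of fifths back to semitones.
    return (7 * fifths) % 12


def transpose(profile, semitones):
    """Circularly shift a chromatic profile upward by a number of semitones."""
    return [profile[(p - semitones) % 12] for p in range(12)]


# Illustrative input: a binary C major scale template.
c_major = [1, 0, 1, 0, 1, 1, 0, 1, 0, 1, 0, 1]
shift = estimate_transposition(c_major, transpose(c_major, 2))
```

A diatonic scale occupies a contiguous arc on the circle of fifths. Its first Fourier coefficient is therefore well away from zero and its phase tracks the position of that arc, which is why a single coefficient suffices in this toy setting.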

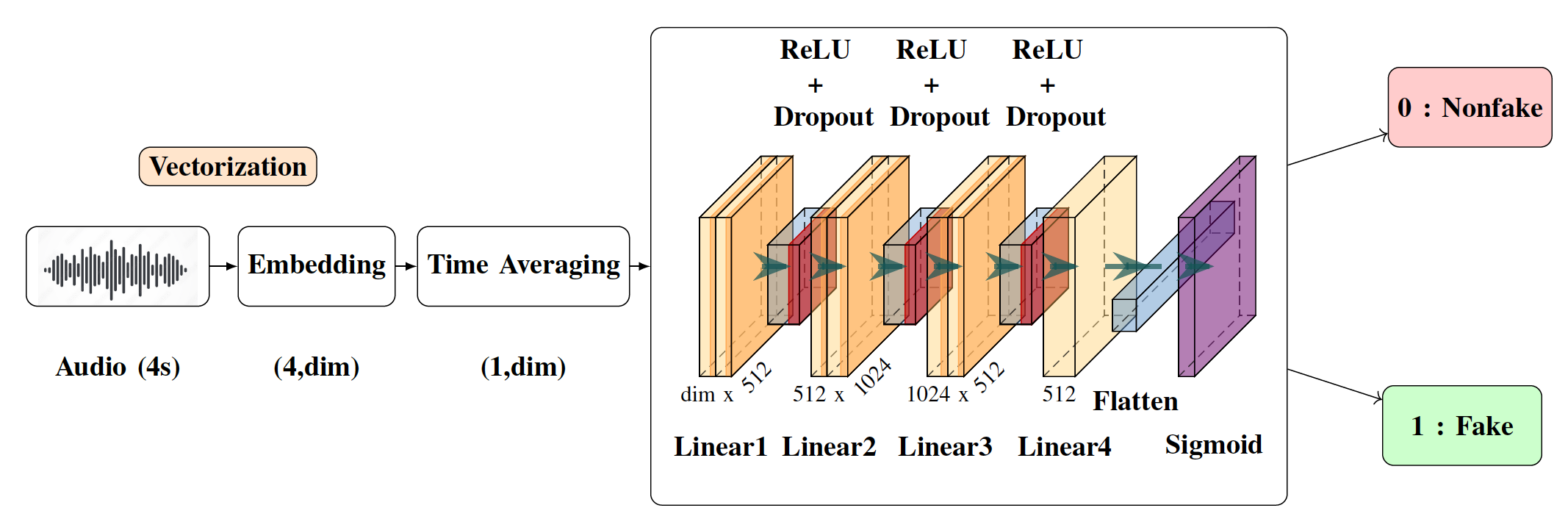

Detection of Deepfake Environmental Audio @ EUSIPCO

With the ever-rising quality of deep generative models, it is increasingly important to be able to discern whether the audio data at hand have been recorded or synthesized. Although the detection of fake speech signals has been studied extensively, this is not the case for the detection of fake environmental audio. We propose a simple and efficient pipeline for detecting fake environmental sounds based on the CLAP audio embedding. We evaluate this detector using audio data from the 2023 DCASE challenge task on Foley sound synthesis. Our experiments show that fake sounds generated by 44 state-of-the-art synthesizers can be detected on average with 98% accuracy. We show that using an audio embedding trained specifically on environmental audio is beneficial over a standard VGGish one as it provides a 10% increase in detection performance. The sounds misclassified by the detector were tested in an experiment on human listeners who showed modest accuracy with nonfake sounds, suggesting there may be unexploited audible features.