UPCOMING EVENTS

Premiere of “in earth we walk” @ Halle 6

A live performance for voice, live electronics, and double bass. Created by Han Han.

In earth we walk is a fleeting moment where voices become agents for constructing nature-inspired landscapes: voices utter semantically charged words conveying vivid scenarios; voices supply raw sonic material that is treated as pure sound. The libretto is a six-stanza poem that unfolds a series of pictorial and psychological scenes, exploring themes of longing, awe and the reckoning with impermanence. Together, vocal emulations of clouds, torrents, winds, tides and sands weave into a sonic experience that evokes one’s multifaceted relationship with the many wonders and situations earth puts one in.

“Sensing the City Using Sound Sources: Outcomes of the CENSE Project” @ Urban Sound Symposium

OTHER NEWS

Towards multisensory control of physical modeling synthesis @ Inter-Noise

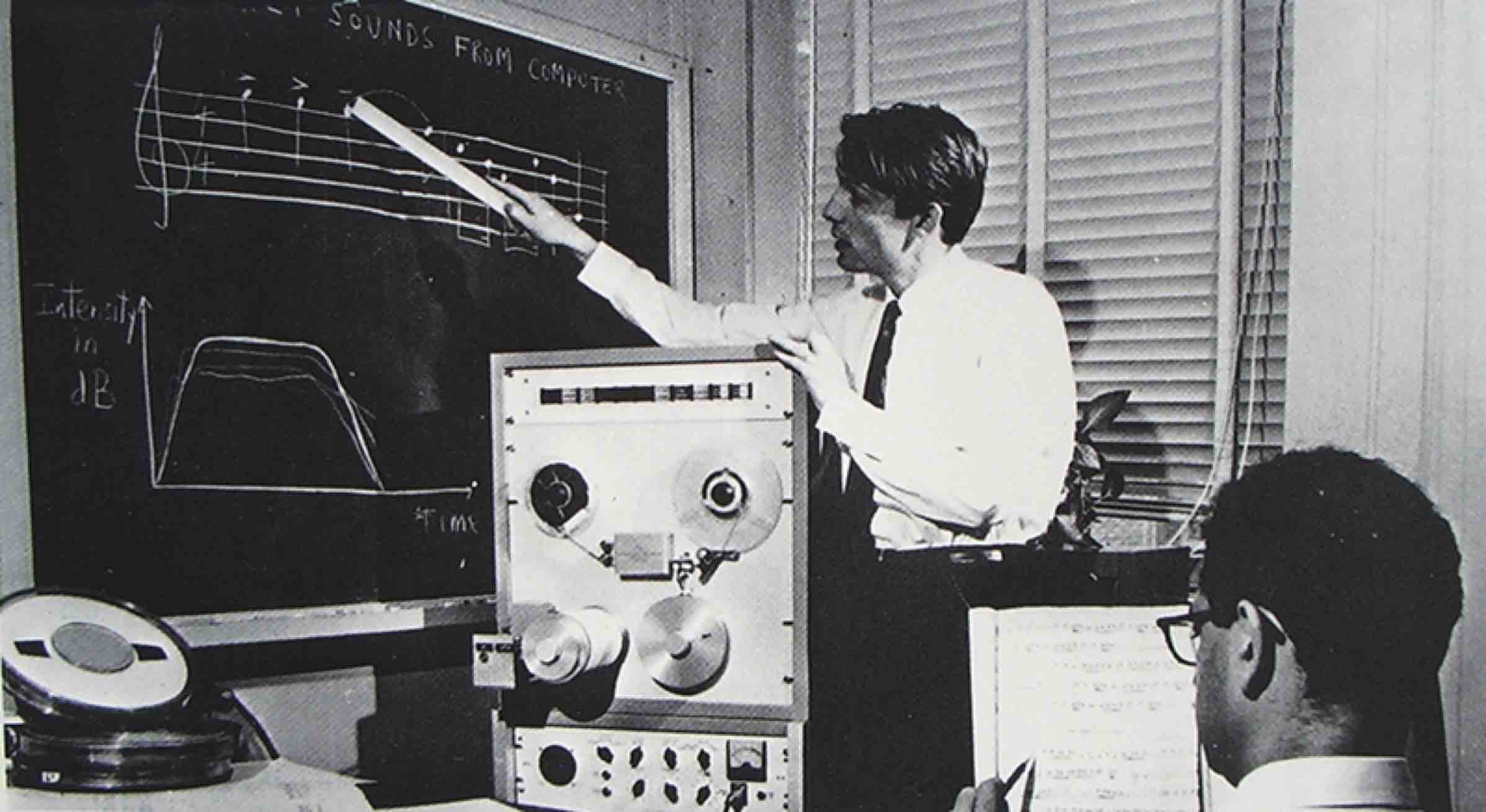

Structure Versus Randomness in Computer Music and the Scientific Legacy of Jean-Claude Risset @ JIM

According to Jean-Claude Risset (1938–2016), “art and science bring about complementary kinds of knowledge”. In 1969, he presented his piece Mutations as “[attempting] to explore […] some of the possibilities offered by the computer to compose at the very level of sound—to compose sound itself, so to speak.” In this article, I propose to take the same motto as a starting point, yet while adopting a mathematical and technological outlook, more so than a musicological one.

Instabilities in Convnets for Raw Audio @ IEEE SPL

PhD offer: Machine learning on solar-powered environmental sensors

Many biological and geophysical phenomena follow a near-periodic day-night cycle, known as circadian rhythm. When designing AI-enabled autonomous sensors for environmental modeling, this circadian rhythm poses both a challenge and an opportunity.

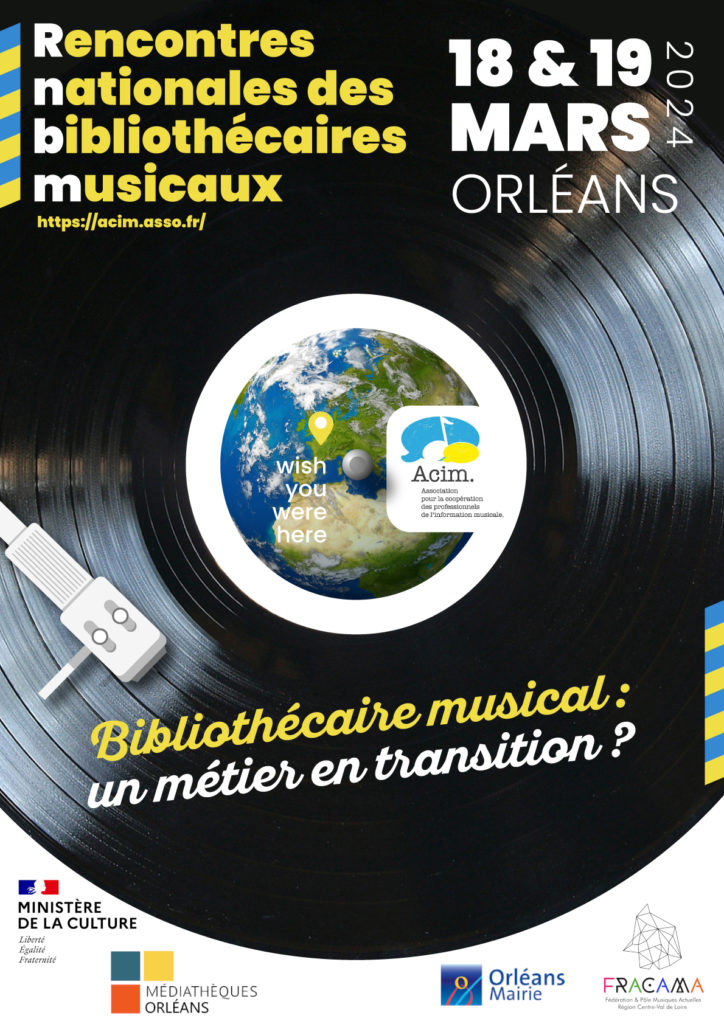

Music librarian: a profession in transition?

The Association pour la Coopération des professionnels de l’Information musicale (ACIM) is holding its 2024 conference in Orléans. This year’s theme is: “Music librarian: a profession in transition?”.

PhD offer: Developmental robotics of birdsong

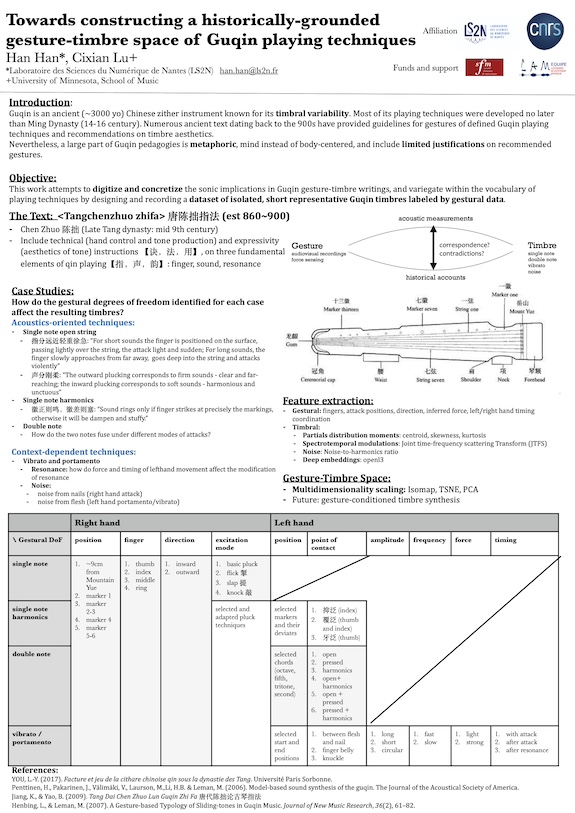

Towards constructing a historically grounded gesture-timbre space of Guqin playing techniques @ Timbre

The Guqin is an ancient Chinese zither known for its timbral variability and for the vital role that timbre, as opposed to melody or rhythm, plays in its classical compositions. Numerous ancient texts dating back to the 1500s provide gestural guidelines for defined Guqin playing techniques and recommendations on timbre aesthetics. These texts also suggest that small deviations in gesture have a significant impact on the resulting timbre. Nevertheless, traditionally and even today, Guqin pedagogies are largely metaphoric, addressing mind rather than body, and offer limited elaboration on recommended gestures. To digitize and make concrete the sonic implications of Guqin gesture-timbre writings, and to add nuance to the oversimplified vocabulary of playing techniques, this study aims to design and record a dataset of isolated, short, representative Guqin sounds labeled with gestural data. The sounds are curated from passages in ancient texts that emphasize gesture-induced timbral differences. We decompose the notion of gesture into nine degrees of freedom for both hands, including left/right hand position, fingers used, point of contact, left/right hand temporal coordination, etc. We define a ladder of gestural data at various levels, ranging from discrete labels of playing techniques, through the aforementioned degrees of freedom, to continuous signals acquired by a high-speed camera with an automatic hand-tracking system. We analyze, in the time-frequency domain, the timbres resulting from conventional playing gestures and from their systematically “perturbed” versions. We investigate the correlation between timbres and their underlying gestures via methods derived from multidimensional scaling.

8 February 2024: “Les sens artificiels” (Artificial Senses) at Stereolux

Thursday 8 February 2024 at 6:30 pm at Stereolux, as part of the Nuit blanche des chercheur-e-s of Nantes Université.

Artificial intelligence (AI) is revolutionizing our understanding of living systems by drawing on the human senses. It enables in-depth analysis of speech, sound signals, and bioacoustics. In medicine, the senses can be reproduced to improve diagnosis. In nature, the senses can be simulated to improve our understanding of living things. During this session led by renowned experts, explore the advances of AI for the health and the living world of the future.

Mathieu, Vincent, and Modan present at DCASE

16 November 2023: GdR ISIS day on “Signal processing for music”

As part of the “Signal processing for audio and machine listening” action of GdR ISIS, we are organizing a third day dedicated to music signal processing, on Thursday 16 November 2023 at IRCAM.

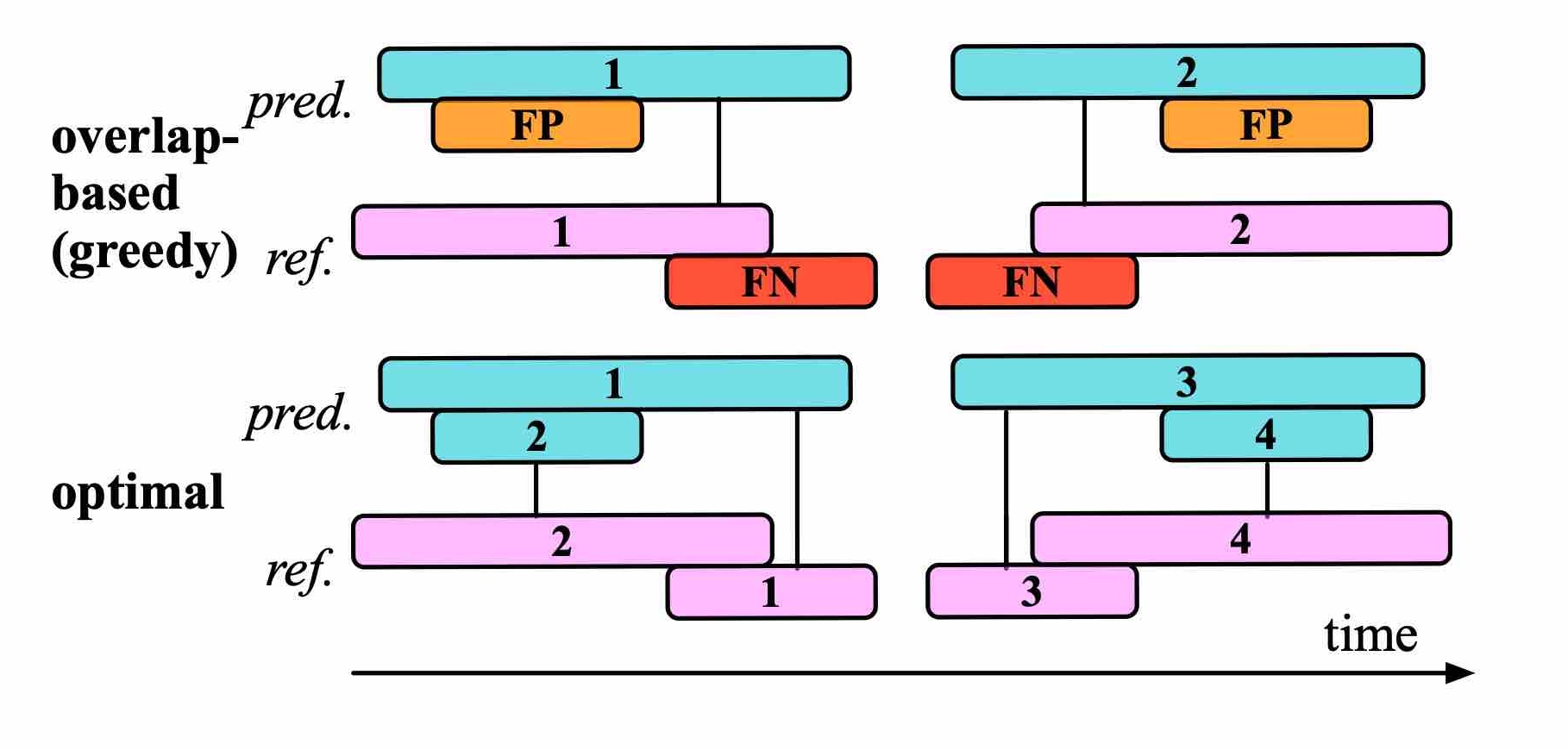

Efficient Evaluation Algorithms for Sound Event Detection @ DCASE

Our article presents an algorithm for pairwise intersection of intervals by performing binary search within sorted onset and offset times. Computational benchmarks on the BirdVox-full-night dataset confirm that our algorithm is significantly faster than exhaustive search. Moreover, we explain how to use this list of intersecting prediction-reference pairs for the purpose of SED evaluation.
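To make the idea concrete, here is a minimal sketch of interval intersection by binary search, written from the description above and not taken from the article. The function names `intersecting_pairs` and `brute_force_pairs`, the `(onset, offset)` tuple layout, and the convention that intervals touching only at an endpoint do not intersect are all assumptions made for illustration.

```python
from bisect import bisect_left, bisect_right


def intersecting_pairs(references, predictions):
    """Return sorted (ref_index, pred_index) pairs whose intervals overlap.

    Intervals are (onset, offset) tuples with onset < offset. Two intervals
    overlap when each one starts strictly before the other one ends.
    This is an illustrative sketch, not the authors' implementation.
    """
    # Sort prediction indices once by onset and once by offset.
    by_onset = sorted(range(len(predictions)), key=lambda j: predictions[j][0])
    by_offset = sorted(range(len(predictions)), key=lambda j: predictions[j][1])
    onsets = [predictions[j][0] for j in by_onset]
    offsets = [predictions[j][1] for j in by_offset]

    pairs = []
    for i, (ref_on, ref_off) in enumerate(references):
        # Number of predictions that start strictly before the reference ends.
        n_started = bisect_left(onsets, ref_off)
        # Position of the first prediction that ends strictly after the
        # reference starts, in offset-sorted order.
        first_alive = bisect_right(offsets, ref_on)
        # A prediction intersects the reference if it satisfies both conditions.
        started = set(by_onset[:n_started])
        for j in by_offset[first_alive:]:
            if j in started:
                pairs.append((i, j))
    return sorted(pairs)


def brute_force_pairs(references, predictions):
    """Exhaustive search over all pairs, kept as a correctness baseline."""
    return sorted(
        (i, j)
        for i, (ref_on, ref_off) in enumerate(references)
        for j, (pred_on, pred_off) in enumerate(predictions)
        if pred_on < ref_off and pred_off > ref_on
    )
```

The two binary searches discard predictions that end before a reference starts or start after it ends, so only plausible candidates are compared. The resulting list of index pairs is the kind of prediction-reference pairing that an SED evaluation could then match and score.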

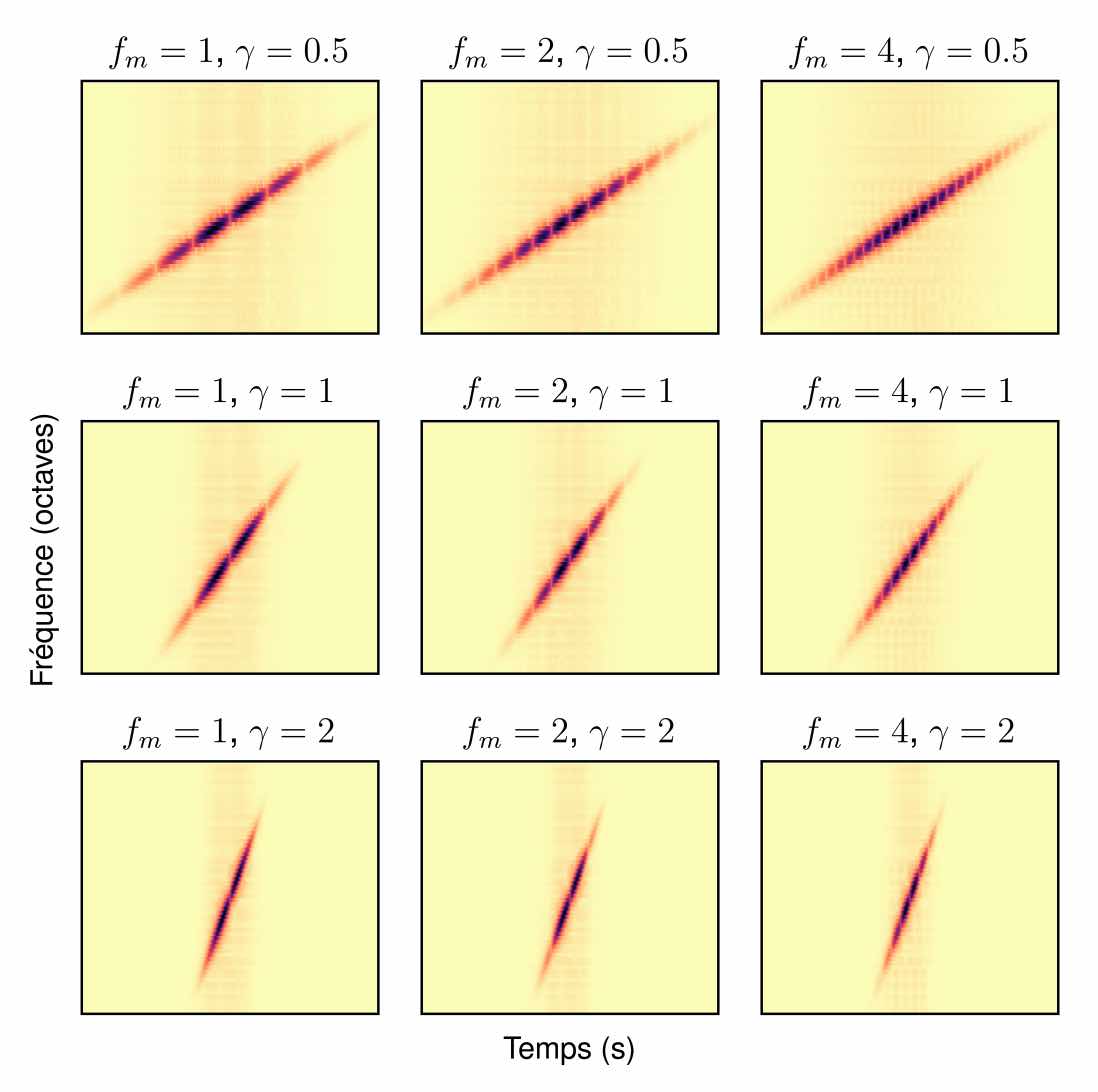

Riemannian manifold learning for the analysis-synthesis of nonstationary signals @ GRETSI

“Can Technology Save Biodiversity?” Call for papers

Foley sound synthesis at the DCASE 2023 challenge

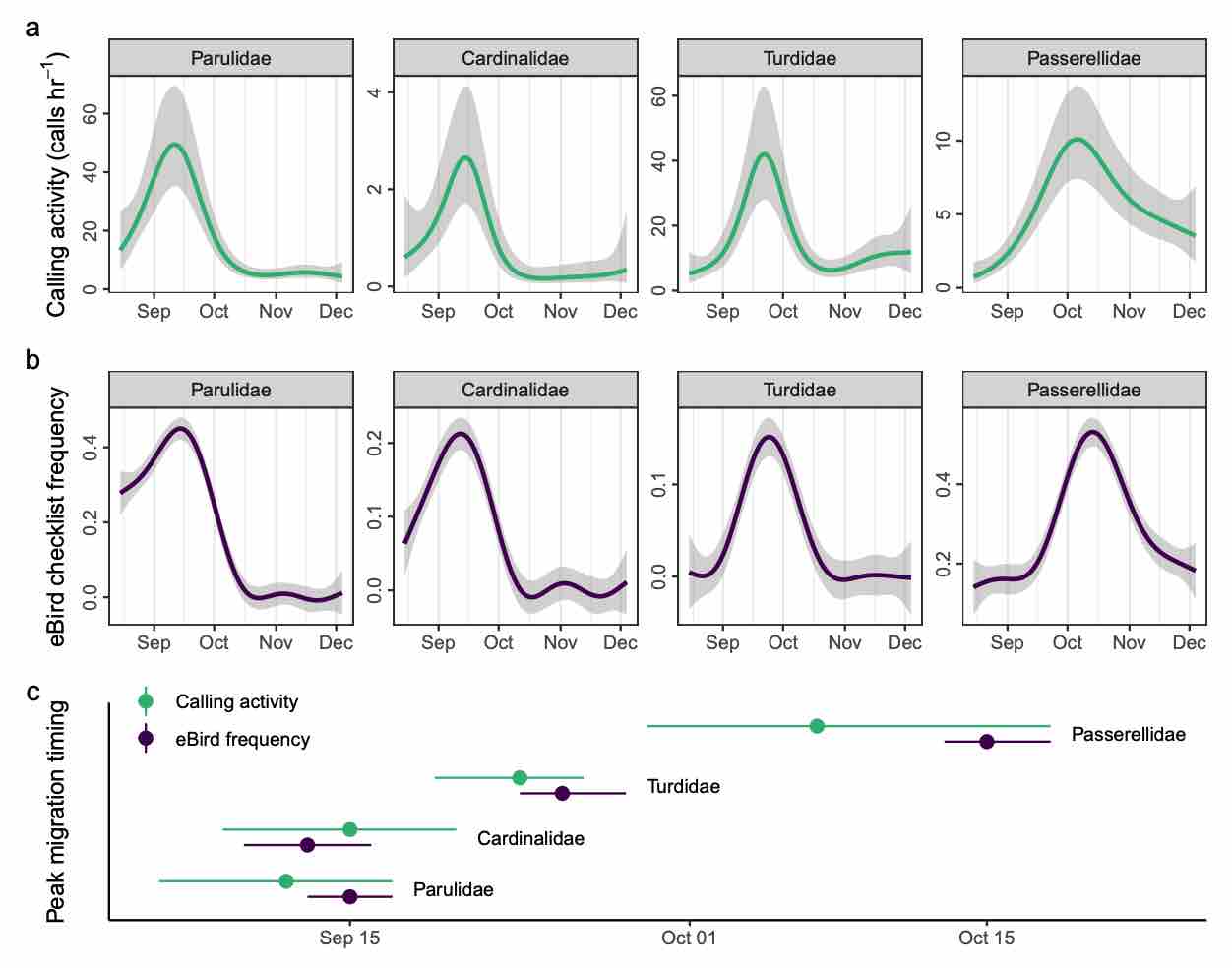

Automated acoustic monitoring captures timing and intensity of bird migration @ J. Applied Ecology

Monitoring small, mobile organisms is crucial for science and conservation, but is technically challenging. Migratory birds are prime examples, often undertaking nocturnal movements of thousands of kilometres over inaccessible and inhospitable geography. Acoustic technology could facilitate widespread monitoring of nocturnal bird migration with minimal human effort. Acoustics complements existing monitoring methods by providing information about individual behaviour and species identities, something generally not possible with tools such as radar. However, the need for expert humans to review audio and identify vocalizations is a challenge to application and development of acoustic technologies. Here, we describe an automated acoustic monitoring pipeline that combines acoustic sensors with machine listening software (BirdVoxDetect). We monitor 4 months of autumn migration in the northeastern United States with five acoustic sensors, extracting nightly estimates of nocturnal calling activity of 14 migratory species with distinctive flight calls. We examine the ability of acoustics to inform two important facets of bird migration: (1) the quantity of migrating birds aloft and (2) the migration timing of individual species. We validate these data with contemporaneous observations from Doppler radars and a large community of citizen scientists, from which we derive independent measures of migration passage and timing. Together, acoustic and weather data produced accurate estimates of the number of actively migrating birds detected with radar. A model combining acoustic data, weather and seasonal timing explained 75% of variation in radar-derived migration intensity. This model outperformed models that lacked acoustic data. Including acoustics in the model decreased prediction error by 33%. A model with only acoustic information outperformed a model comprising weather and date (57% vs. 48% variation explained, respectively). 
Acoustics also successfully measured migration phenology: species-specific timing estimated by acoustic sensors explained 71% of variation in timing derived from citizen science observations. Our results demonstrate that cost-effective acoustic sensors can monitor bird migration at species resolution at the landscape scale and should be an integral part of management toolkits. Acoustic monitoring presents distinct advantages over radar and human observation, especially in inaccessible and inhospitable locations, and requires significantly less expense. Managers should consider using acoustic tools for monitoring avian movements and identifying and understanding dangerous situations for birds. These recommendations apply to a variety of conservation and policy applications, including mitigating the impacts of light pollution, siting energy infrastructure (e.g. wind turbines) and reducing collisions with structures and aircraft.